Bringing On-device Generative AI to the Pi: When and why you’ll need the Raspberry Pi AI HAT+ 2

To watch the On-device GenAI on Raspberry Pi AI HAT+ 2 with Hailo-10H demo, click here

The Raspberry Pi AI HAT+ 2 is the official GenAI PCIe add-on for Raspberry Pi 5, released on January 15, 2026. It pairs a Hailo-10H AI accelerator, rated at up to 40 TOPS of inference performance (INT4), with 8 GB of onboard LPDDR4X memory, enabling local vision and small GenAI workloads on one of the most popular single-board computers ever made.

This hardware combination is designed for on-device generative AI, which means operating within edge-device requirements: low power consumption, no dependence on cloud connectivity (for maximum availability), low latency, and maximum data privacy. As with any embedded hardware, performance trade-offs matter: edge devices are constrained in memory, compute, and power budget (typically single-digit watts).

For this reason, generative AI applications that require broad world knowledge, conversations over very large contexts, or knowledge-heavy reasoning or learning are better suited to the cloud. For latency-sensitive, privacy-critical, knowledge-confined applications, the new AI HAT+ 2 is an ideal fit.

Let’s break down when and where the AI HAT+ 2 makes sense, and why it’s not just another niche gadget.

When the AI HAT+ 2 Really Excels

The AI HAT+ 2 is strongest in workloads that are compute-heavy up front, not workloads dominated by token-by-token (TBT) generation. In practice, this means it shines when you need the Raspberry Pi’s CPU available and responsive while running generative AI applications with the following profiles:

- Fast execution of encoders – turning visual, audio, or text input into prompt embeddings

- Short time to first token (TTFT) – when interactivity and user experience are critical

| | Raspberry Pi 5 CPU | Hailo-10H |
|---|---|---|
| Time to First Token* (TTFT) | 2039 ms | 320 ms |

\* 96 prefill tokens, measured on the CPU using llama.cpp

- Large prefill – when ingesting fresh data, where the input context is larger than the output response

- Multi-stage pipelines – when processing is sequential and the output of one model becomes the input of the next
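To put the TTFT figures in the table above into perspective, the short calculation below converts them into an effective prefill rate and a speedup factor. It is a back-of-the-envelope sketch that uses only the two measurements in the table and assumes the whole TTFT is spent on prefill (ignoring tokenization and setup overhead).

```python
# TTFT figures from the table: 96 prefill tokens on each device.
PREFILL_TOKENS = 96
CPU_TTFT_MS = 2039   # Raspberry Pi 5 CPU (llama.cpp)
HAILO_TTFT_MS = 320  # Hailo-10H


def prefill_tokens_per_s(tokens: int, ttft_ms: float) -> float:
    """Effective prefill rate, attributing the whole TTFT to prefill."""
    return tokens / (ttft_ms / 1000.0)


cpu_rate = prefill_tokens_per_s(PREFILL_TOKENS, CPU_TTFT_MS)
hailo_rate = prefill_tokens_per_s(PREFILL_TOKENS, HAILO_TTFT_MS)
speedup = CPU_TTFT_MS / HAILO_TTFT_MS  # how many times shorter the wait is

print(f"CPU:   {cpu_rate:.1f} tokens/s")
print(f"Hailo: {hailo_rate:.1f} tokens/s")
print(f"TTFT speedup: {speedup:.1f}x")
```

That works out to roughly 47 versus 300 prompt tokens per second, or about a 6.4x shorter wait before the first token appears.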

Ideal Use Cases

Vision-Language Models (VLMs)

VLMs map naturally to the AI HAT+ 2’s strengths, because the image encoder is a compute-heavy stage whose output is a compact set of token embeddings. With a 2B-parameter model, which would be prohibitively slow on the Pi’s CPU alone, the Hailo-10H enables event triggering, logging, indexing, captioning, and free-text smart search.

The possible applications are countless. In home security and surveillance, for example: notify me when my child is back from school, turn the alarm off when a package is delivered, or send me a log of meaningful pet-monitoring events at the end of each day. The same approach applies to quality assurance, healthcare, industrial automation, and more.

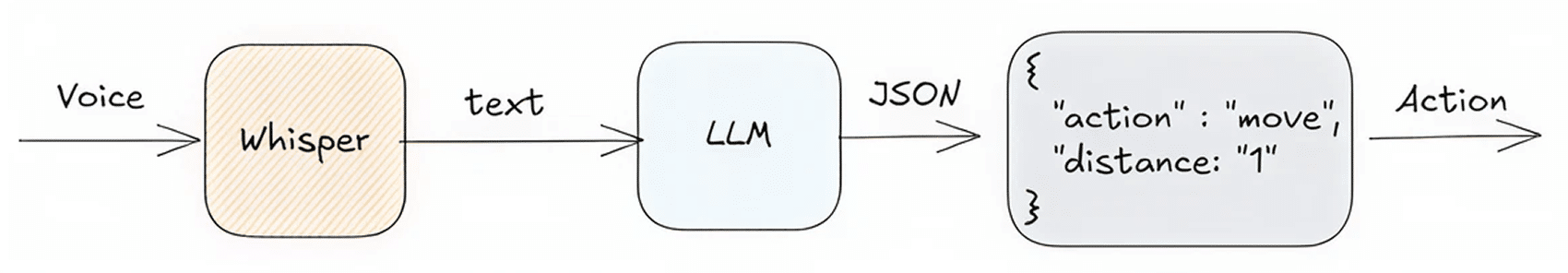

Voice to Action

Another strong fit for the AI HAT+ 2 is a local voice-to-action agent, because it combines high-compute inference with relatively low-bandwidth interaction. These workflows often rely on a large prefill step, that is, processing a large, frequently changing input context before generating a short response, and this step can be much slower on the Raspberry Pi CPU alone. Accelerating it is especially valuable for agents that continuously ingest fresh data (sensor readings, device state, logs, schedules, recent events) and then respond locally with a short command or action.
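A rough way to see why large-prefill workloads benefit most is to split the latency of one turn into a prefill term that grows with the input context and a decode term that grows with the output. The sketch below makes two loudly stated assumptions. First, prefill time scales linearly with input length at the per-token rates implied by the 96-token TTFT measurements; real prefill cost is not perfectly linear. Second, the 100 ms/token decode time is a made-up placeholder applied to both devices only to isolate the effect of prefill. It is not a measured CPU or Hailo-10H figure.

```python
from dataclasses import dataclass


@dataclass
class Device:
    name: str
    prefill_ms_per_token: float  # extrapolated from a single TTFT measurement
    decode_ms_per_token: float   # HYPOTHETICAL placeholder; use a measured value

    def prefill_ms(self, input_tokens: int) -> float:
        # Assumes prefill cost grows linearly with input length.
        return input_tokens * self.prefill_ms_per_token

    def latency_ms(self, input_tokens: int, output_tokens: int) -> float:
        # Rough model: prefill (time to first token) plus token-by-token decoding.
        return self.prefill_ms(input_tokens) + output_tokens * self.decode_ms_per_token


# Per-token prefill cost implied by the 96-token TTFT figures in the table.
# The decode value is the same made-up number for both devices.
cpu = Device("Raspberry Pi 5 CPU", 2039 / 96, decode_ms_per_token=100.0)
hailo = Device("Hailo-10H", 320 / 96, decode_ms_per_token=100.0)

# One voice-to-action turn: a large, fresh context in, a short command out.
INPUT_TOKENS, OUTPUT_TOKENS = 1000, 10

for dev in (cpu, hailo):
    total = dev.latency_ms(INPUT_TOKENS, OUTPUT_TOKENS)
    share = dev.prefill_ms(INPUT_TOKENS) / total
    print(f"{dev.name}: ~{total / 1000:.1f} s total, {share:.0%} spent in prefill")
```

Under these assumptions, prefill accounts for most of the turn on the CPU, which is the cost the accelerator targets. Substitute rates measured on your own setup before drawing conclusions.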

The full sequential pipeline first converts free speech to text using a Whisper-class model. A small LLM then handles intent understanding, decision-making, and natural free-text interaction, triggering real-world actions locally and reliably. This architecture brings agentic AI and physical AI to the edge, and the accelerator makes it practical to run larger Whisper models that improve accuracy, supporting responsive, privacy-preserving, low-cost, real-time voice control for a seamless user experience.
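The sequential pipeline described above can be sketched as a chain of stages in which each stage’s output is the next stage’s input. In this minimal Python sketch, `transcribe()` and `choose_action()` are hypothetical stubs standing in for the Whisper-class model and the small LLM. They do not call any Hailo or Whisper API, and the action names are invented for illustration. The sketch also restricts the model to an allowlist of local actions. This is one common way to make action triggering reliable, assumed here rather than taken from the article.

```python
from typing import Callable


# HYPOTHETICAL model stubs. A real deployment would run a Whisper-class
# speech-to-text model in transcribe() and prompt a small LLM in choose_action().
def transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")  # pretend the audio is already text


def choose_action(transcript: str, allowed: list[str]) -> str:
    # Crude keyword matching stands in for the LLM's intent understanding.
    text = transcript.lower()
    if "light" in text:
        candidate = "lights_off" if "off" in text else "lights_on"
    elif "blind" in text:
        candidate = "open_blinds"
    else:
        candidate = "unknown"
    # Mirror the constraint a real prompt would impose: answer from the allowlist only.
    return candidate if candidate in allowed else "unknown"


# Local actions the agent is allowed to trigger (hypothetical examples).
ACTIONS: dict[str, Callable[[], str]] = {
    "lights_on": lambda: "living-room lights: ON",
    "lights_off": lambda: "living-room lights: OFF",
    "open_blinds": lambda: "blinds: OPEN",
}


def voice_to_action(audio: bytes) -> str:
    """Sequential pipeline: speech -> text -> intent -> local action."""
    transcript = transcribe(audio)                     # stage 1 output...
    action = choose_action(transcript, list(ACTIONS))  # ...is stage 2 input
    handler = ACTIONS.get(action)
    if handler is None:
        # Free-form model output is never executed; only allowlisted actions run.
        return f"no action taken for: {transcript!r}"
    return handler()


print(voice_to_action(b"Please turn off the lights"))
print(voice_to_action(b"What's the weather like?"))
```

Keeping the model’s job to picking one name from a fixed set keeps its output short, which fits the large-prefill, short-response profile described above.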

Here, too, the applications are endless. For example, local voice-to-action enables natural, menu-free control of devices, eliminating the need to navigate elaborate menus and submenus or flip through tedious manuals. Another example is intuitive wayfinding in public spaces such as shopping centers, terminals, and campuses, where you state in free text what you want to do rather than the exact location you need to find (Where can I find sunglasses? How do I get to the cafeteria? Where is my gate?). In robotics and industrial systems, voice-to-action can enable more responsive human-machine interaction and seamless cooperation between people and machines.

Advanced Vision Applications

For demanding vision workloads, the AI HAT+ 2 delivers a step change: its high compute and efficient on-device execution translate directly into performance gains of up to 100% (that is, up to twice as fast) over the previous AI HAT+. The Hailo-10H accelerates large CNNs and Transformer-based vision models, including CLIP, zero-shot detection, and high-capacity object detectors, enabling richer perception without increasing bandwidth or power. This makes it possible to build physical AI systems that combine multiple vision stages (detection, embedding, semantic matching, and reasoning) entirely at the edge, unlocking more capable and responsive applications in home automation, security, robotics, retail, industrial automation, and other domains. Because no cloud connectivity is required, no data leaves the device, and there are no network delays or costs.
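The semantic-matching stage mentioned above is typically done as in CLIP-style zero-shot classification. The image (or a detected crop) and a set of free-text labels are embedded into a shared space, and the label with the highest cosine similarity wins. The sketch below shows only that matching step in plain Python. The three-dimensional vectors are hypothetical toy values standing in for real encoder outputs, and the 0.8 threshold is an arbitrary illustrative choice, not a recommended setting.

```python
import math
from typing import Optional

# HYPOTHETICAL toy embeddings. In a real CLIP-style pipeline, an image encoder
# and a text encoder map an image crop and each free-text label into the same
# high-dimensional space; three dimensions are used here for readability.
IMAGE_EMBEDDING = [0.9, 0.1, 0.3]
LABEL_EMBEDDINGS = {
    "a delivery package": [0.8, 0.2, 0.4],
    "a cat": [0.1, 0.9, 0.2],
    "a person at the door": [0.3, 0.3, 0.9],
}


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def zero_shot_match(
    image: list[float], labels: dict[str, list[float]], threshold: float = 0.8
) -> tuple[Optional[str], dict[str, float]]:
    """Score every label; return the best one, or None if none clears the threshold."""
    scores = {name: cosine_similarity(image, vec) for name, vec in labels.items()}
    best = max(scores, key=scores.get)
    return (best if scores[best] >= threshold else None), scores


label, scores = zero_shot_match(IMAGE_EMBEDDING, LABEL_EMBEDDINGS)
print(label)
for name, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{score:.2f}  {name}")
```

Because the labels are plain text, adding a new category means embedding one more phrase rather than retraining a detector. The threshold lets the system report “no match” instead of forcing a label onto every frame.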

Example Use Cases That Really Play to the New AI HAT+ 2’s Strengths

| Use Case | Why It Works |
|---|---|
| Offline Home Automation & Robotics | Enables free-text operation without cloud dependency |
| Home Security | Small language outputs for event triggering, captioning, and summarization on top of real-time vision |
| Secure Industrial Monitoring | Air-gapped generative summarization of logs & sensor data |
| Information Kiosks in Public Places | Natural speech, zero-queue interaction with information agents |

Bottom Line: Don’t ask your toaster for history lessons…

The Raspberry Pi AI HAT+ 2 isn’t meant to compete with cloud inference. Large LLMs will always run better where compute and memory are effectively unconstrained. However, for edge scenarios that value privacy, offline operation, low latency, low power consumption, or battery operation, it unlocks real capabilities that weren’t feasible on the Raspberry Pi platform before, with or without the original AI HAT+.

You’ll get the most out of it when you need tightly scoped, on-device generative tasks alongside vision or real-world sensor input, and when the alternative is cloud dependency or far larger, more expensive hardware.

The robust Hailo Community, with thousands of active developers, and the upcoming integrations with Frigate and Home Assistant make the Hailo-powered AI HAT+ 2 the most attractive choice for anyone looking to take their first steps in physical AI and home automation.