The Revolution of Physical Intelligence Belongs at the Edge

Over the past several years, artificial intelligence has progressed through a series of distinct phases. Early AI systems focused on prediction and object recognition. Generative AI then introduced the ability to create new content, from text to images to code. More recently, agentic AI systems have begun coordinating complex digital tasks across software systems.

Throughout all of these stages, however, the output of AI has remained fundamentally digital.

The next step in this evolution is physical AI – systems that do not just generate digital output, but interact directly with the physical world.

Until recently, AI in robotics was primarily about perception. Machines could see, hear, and interpret the environment through sensors such as cameras and microphones. Vision models detected objects, audio models recognized speech, and AI systems fed perception data into mostly rule-based control systems.

Physical AI moves beyond this model. Instead of only interpreting the environment, machines must use AI to perceive their surroundings, reason about what they observe, and take actions that affect the real world. That requirement fundamentally changes how AI systems must operate, because decisions and corresponding actions must be made instantly as the physical world continues to move.

The Sense-Think-Act Loop

Robotics systems have long relied on sensors such as cameras, microphones, and other devices to collect information about their environment. AI models have dramatically improved the ability of machines to interpret that information, enabling advances in areas such as computer vision and speech recognition.

In many earlier systems, however, perception was only one step in a largely sequential process. Once information was collected and interpreted, actions were typically executed through predefined rules. By nature, physical AI requires a different model.

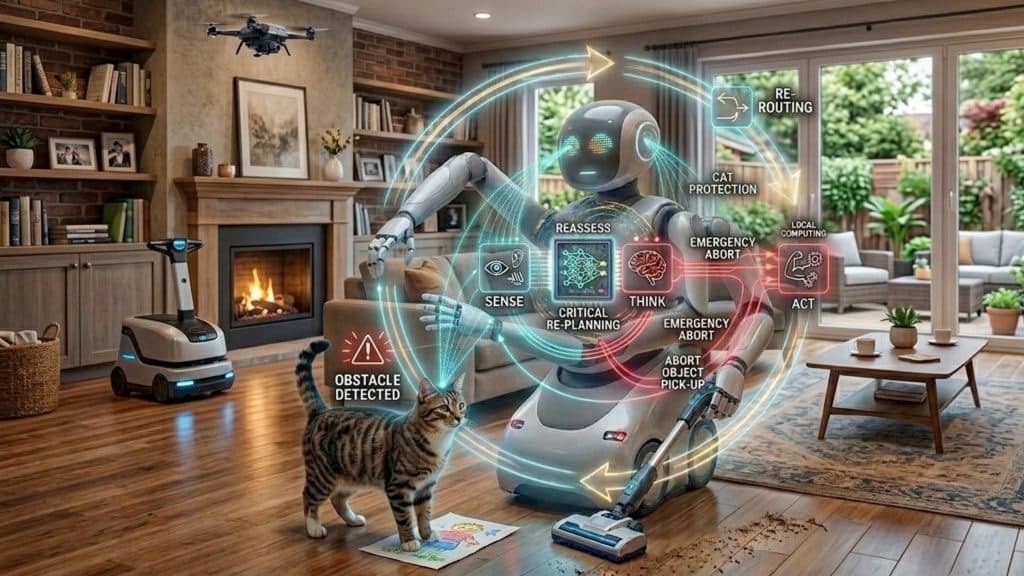

Rather than operating as a linear pipeline in which sensing happens first and action follows later, machines must run a tightly coupled feedback loop where perception, reasoning, and actuation happen continuously and simultaneously, with constant feedback from the environment. A system observes its surroundings, decides how to respond, and acts immediately. In this sense-think-act loop, the robot senses, decides, acts, observes the consequences of its action, and adjusts, over and over again, many times per second. This non-stop feedback loop is what allows robots to behave safely and effectively in dynamic, unpredictable environments.

Consider a household cleaning robot that identifies a piece of paper on the floor and moves to pick it up. Just as the robot reaches for the paper, a cat steps onto it. The robot must instantly reassess the situation and adjust its behavior to avoid harming the cat or damaging the environment.

In scenarios like this, there is no room for network delays, dropped connections, or round-trip latency to the cloud. The system must react immediately to changes in its surroundings.
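The sense-think-act loop described in this section can be sketched in a few lines of Python. This is only an illustrative sketch, not a real robot controller: the `Observation` fields, the action names (`"search"`, `"reach"`, `"retract"`), and the `run_loop` helper are all hypothetical, and the sensor is simulated with a short list of frames that mirrors the paper-and-cat scenario.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """Hypothetical, simplified view of what the robot perceives in one cycle."""
    target_visible: bool      # e.g. the piece of paper is in view
    obstacle_on_target: bool  # e.g. a cat has stepped onto the paper


def think(obs: Observation) -> str:
    """Pick an action from the latest observation only (no stale plan)."""
    if obs.obstacle_on_target:
        return "retract"  # safety first: back off before anything else
    if obs.target_visible:
        return "reach"
    return "search"


def run_loop(sense, act, steps: int) -> list[str]:
    """Run `steps` sense-think-act cycles and return the actions taken."""
    actions = []
    for _ in range(steps):
        obs = sense()         # sense: read the current state of the world
        action = think(obs)   # think: decide based on what was just observed
        act(action)           # act: the result shows up in the next observation
        actions.append(action)
    return actions


# Simulated sensor frames: nothing in view, paper spotted, cat steps on paper.
frames = iter([
    Observation(target_visible=False, obstacle_on_target=False),
    Observation(target_visible=True, obstacle_on_target=False),
    Observation(target_visible=True, obstacle_on_target=True),
])
log = run_loop(sense=lambda: next(frames), act=lambda a: None, steps=3)
print(log)  # ['search', 'reach', 'retract']
```

The point of the sketch is that every cycle re-reads the world before deciding, so the robot abandons the reach as soon as the cat appears. A real system would also run the loop at a fixed rate and enforce timing deadlines, which this sketch leaves out.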

Why Physical AI Requires the Edge

Cloud infrastructure remains essential to the broader AI ecosystem, enabling large-scale model training, data aggregation, and system optimization. However, when a machine interacts directly with the physical world, the decision loop cannot depend on a network round trip. Latency, connectivity gaps, and unpredictable delays cannot be part of a control system responsible for physical actions.

For real-time interaction with the physical environment, the intelligence driving the sense-think-act loop must run locally. In other words, it must run at the edge.

Edge and cloud are therefore not competing architectures – they are complementary layers of the same system. The cloud trains and improves models and processes massive datasets. The edge executes the real-time intelligence that allows machines to perceive, reason, and act in the moment.

While physical intelligence ultimately requires both, the moment of action belongs at the edge.
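A rough way to see why the loop has to stay local is to treat each cycle as a time budget. The sketch below is a back-of-the-envelope check under assumed numbers: the 50 Hz loop rate, the per-stage timings, and the 40 ms network round trip are all hypothetical values chosen for illustration, not measurements of any real system, and the function names are invented for this example.

```python
def control_period_ms(rate_hz: float) -> float:
    """Time available for one full sense-think-act cycle, in milliseconds."""
    return 1000.0 / rate_hz


def fits_budget(rate_hz: float, sense_ms: float, infer_ms: float,
                act_ms: float, network_rtt_ms: float = 0.0) -> bool:
    """Return True if one cycle, including any network hop, meets its deadline."""
    total_ms = sense_ms + infer_ms + act_ms + network_rtt_ms
    return total_ms <= control_period_ms(rate_hz)


# Hypothetical loop running 50 times per second: 20 ms per cycle.
RATE_HZ = 50

# On-device inference: 3 + 10 + 2 = 15 ms, inside the 20 ms budget.
edge_ok = fits_budget(RATE_HZ, sense_ms=3, infer_ms=10, act_ms=2)

# Same stages plus an assumed 40 ms cloud round trip: 55 ms, deadline missed.
cloud_ok = fits_budget(RATE_HZ, sense_ms=3, infer_ms=10, act_ms=2,
                       network_rtt_ms=40)

print(edge_ok, cloud_ok)  # True False
```

Under these assumptions the network hop alone is twice the entire cycle budget, before accounting for jitter or dropped connections. The same check also shows where the cloud does fit: work with no per-cycle deadline, such as training and fleet-wide analysis, is not constrained by the control period.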

The Role of Task-Specific Robots

The rapid progress in AI models has made machines far more capable of perception and reasoning than even a few years ago. But that progress does not mean robots are suddenly ready to do everything humans can do.

In many cases, the limiting factor is not the intelligence of the software but the capabilities of the hardware itself. While AI systems are advancing rapidly, electro-mechanical systems like hands, joints, actuators, and tools still face significant challenges in dexterity, flexibility, and robustness.

While humanoid robots that can do everything a person can do remain a long-term goal, the near-term reality is that major breakthroughs are required before they can take a meaningful share of the market.

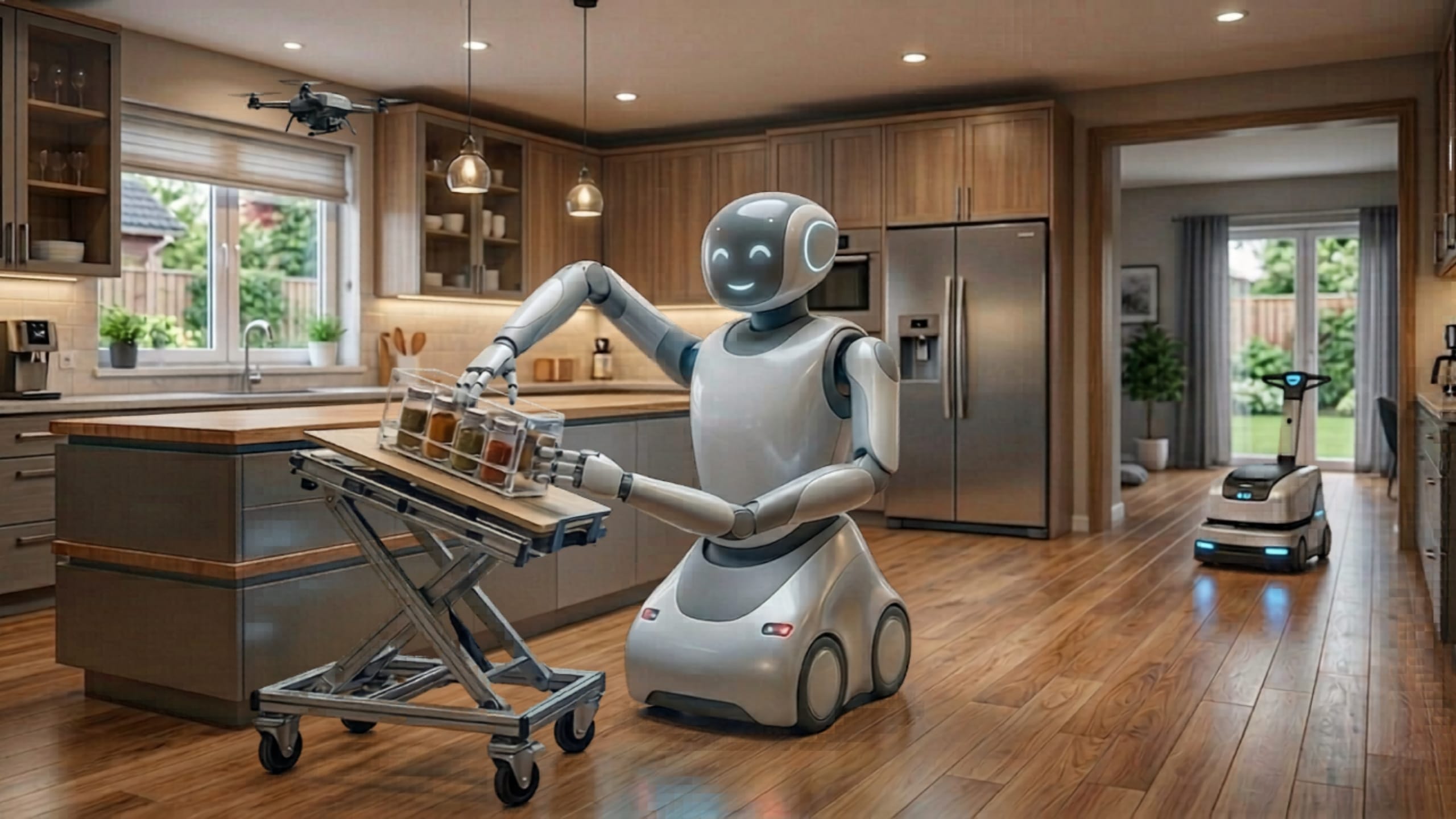

As a result, the near-term robotics landscape is unlikely to be dominated by general-purpose humanoids. Instead, the majority of robots will be task-specific systems designed to perform a defined set of tasks extremely well. A kitchen assistant robot, for example, may be able to slice, chop, mix, and clean a countertop, but not fold laundry or mop floors. These robots are not limited by a lack of intelligence: they are highly intelligent within their domain but are intentionally constrained to deliver reliability, safety, and affordability.

Household robots such as vacuum cleaners and lawn mowers are early examples of this approach. Drones represent another rapidly evolving category. These machines already operate autonomously today, but advances in AI are allowing them to move beyond scripted behavior toward more intelligent interaction with their environment.

Scaling Physical Intelligence

Humanoid robots will continue to attract attention and investment, particularly for high-end niche markets that may justify higher costs. But they are likely to represent only a small fraction of the total robotics market for the foreseeable future.

Task-specific robots, on the other hand, are designed to handle tasks that are dangerous, costly, or unattractive, and are thus poised to appear everywhere: in homes, factories, warehouses, hospitals, retail spaces, public safety, and homeland security. These are high-volume markets, not niche or luxury segments, so robots need to be efficient, reliable, and cost-effective to deploy at scale.

Running advanced AI workloads on millions of devices requires processors capable of delivering high performance with low latency, low power consumption, and cost structures suitable for mass deployment.

In other words, the success of physical AI will not depend solely on the largest models or the most powerful hardware in the cloud. It will depend on efficient edge platforms capable of running intelligent sense-think-act loops directly where machines interact with the real world.

The future of robotics is not about building a small number of machines that can do everything. It is about deploying millions of intelligent systems that can do the right things, extremely well – exactly where and when they are needed.