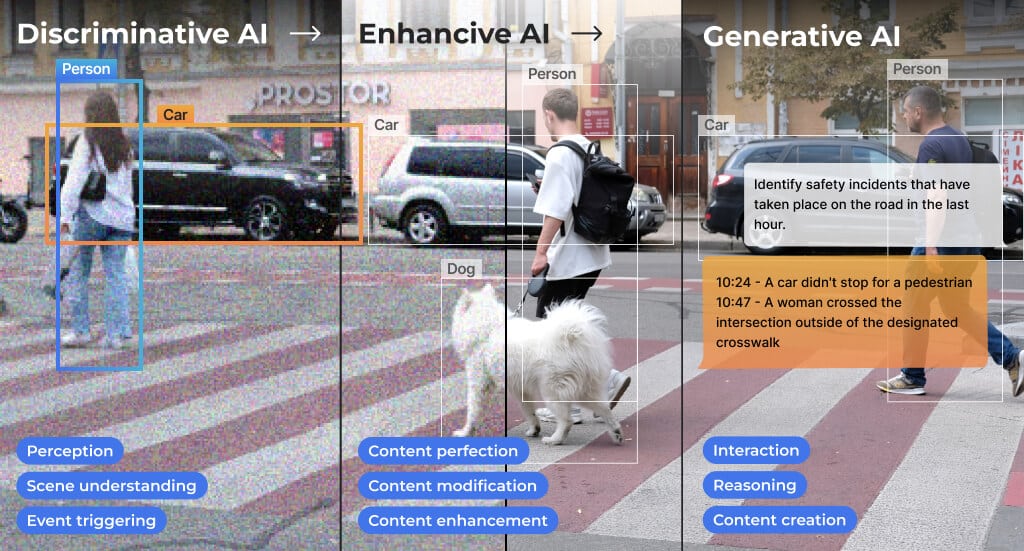

- ProductsGenerative AI AcceleratorsRecommended blog post

AI Vision ProcessorsRecent blog posts

AI Vision ProcessorsRecent blog posts AI AcceleratorsSoftware

AI AcceleratorsSoftware - ApplicationsAutomotiveSecurityIndustrial AutomationRetailPersonal ComputeAutomotiveRecommended blog postsSecurityDownload our e-Books

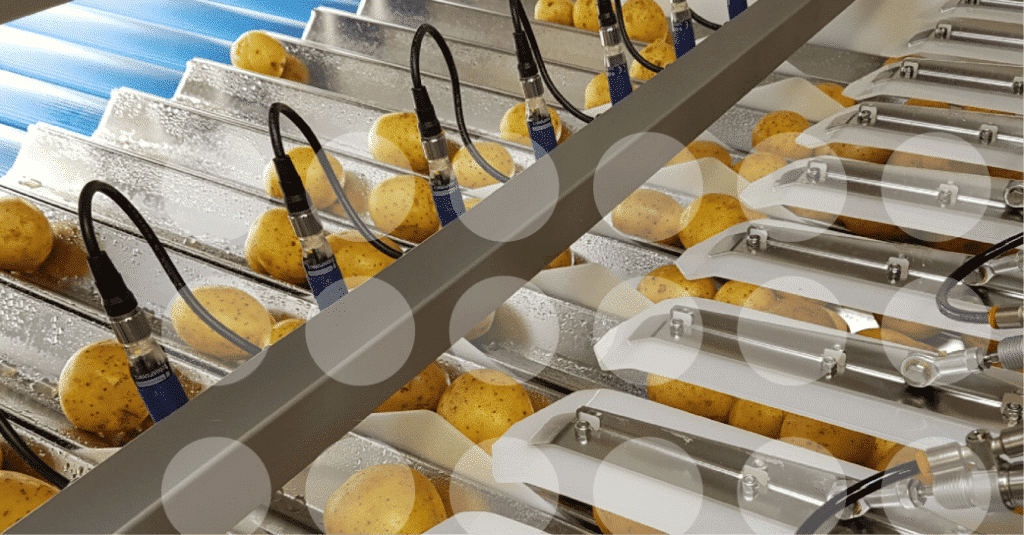

Industrial AutomationCustomer story

Industrial AutomationCustomer story RetailCustomer story

RetailCustomer story Personal ComputeRecommended blog posts

Personal ComputeRecommended blog posts - Resources

- Company

- Ecosystem